By now everybody playing ‘↵Watch Dogs’ (Ubisoft Montreal 2014) seems to know about the dealer’s showroom in ↑The Loop where you can easily steal a ↑Scafati GT, the most Lamborghini-ish of all ↑cars in the game. There are videos on YouTube and more information around the Net explaining the feat. Just walk into the store, enter a car, drive it through the glass panes of one of the floor-to-ceiling windows, and off you go. I had already driven two Scafati GTs, stolen on the street, but nevertheless wanted to see the showroom.

When I reached it in an orange ↑336-TT (it was already that dirty when I picked it up at a motel’s parking lot) I saw that there were only two cars on display in the vast room. Which gave me an idea …

How about filling up the showroom with sports cars instead of stealing them from there? There’s no ↵suitcase to be found at the station, so something else has to be done in the game.

To my eye the 336-TT is a mix of a Porsche 959/969, a 911, and an Opel GT, but the shape of its front definitely resembles a Lamborghini Miura. So it makes a nice pair with the Scafati GT, which itself is a mix of Diablo, Gallardo, and something else. That both were orange was a lucky coincidence. … the guy on the left doesn’t like orange or just has no sense at all of automotive history.

Having arranged the first two nicely, I set out to get the next sports car. But when I returned with it, both orange cars had disappeared. Instead there was now only one red Scafati GT. As someone said in a comment somewhere: it’s not ↑GTA, and there’s no place for storing cars. Well, let’s see …

Here you can see me just having delivered number three. In the meantime it had got dark and the rain was pouring down. The trick seems to be not to walk too far away from the showroom. As The Loop is a glitzy and wealthy ’hood, chances are that you’ll find sports cars in the close vicinity.

The best I could achieve was to get six cars into the showroom: the two Scafati GTs which were already there, a red and a black one; a silver-grey ↑Boxberg R1; two ↑Sunrims, one red and one yellowish green; and a ↑Papavero Stealth Edition. The green Sunrim was the end of the streak, because the police followed me after I had stolen it by dragging its owner from behind the steering wheel. After my release from custody the cars of course were gone again. In the ↑words of the immortal Freddie Mercury: ‘I’m gonna go go go there’s no stopping meeee’ …

Once more I managed to cram six cars into the showroom. See how neatly I parked them in order to get more cars into my indoor parking lot? You guessed it, they disappeared. Enough is enough, I am leaving this place. I heard that there’s a Papavero dealership in the ↑Mad Mile; maybe their showroom is more stable …

No mods or cheats employed.

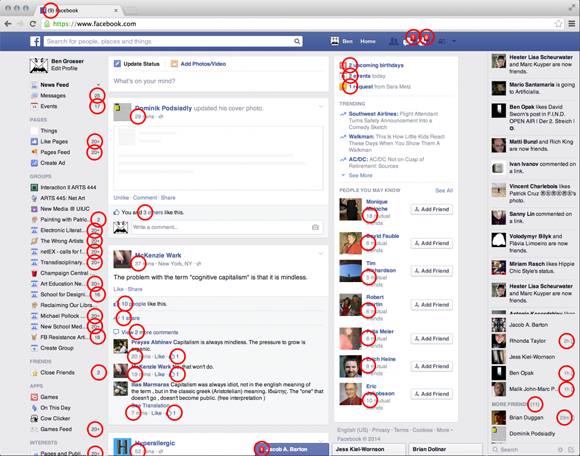

quantification prescribing interaction

Artist and composer ↑Ben[jamin] Grosser, currently teaching at the School of Art & Design, University of Illinois at Urbana-Champaign, published his fine article “↓What do metrics want? How quantification prescribes social interaction on Facebook” two days ago. Here’s the abstract:

Abstract

The Facebook interface is filled with numbers that count users’ friends, comments, and “likes.” By combining theories of agency in artworks and materials with a software studies analysis of quantifications in the Facebook interface, this paper examines how these metrics prescribe sociality within the site’s online social network. That prescription starts with the transformation of the human need for personal worth, within the confines of capitalism, into an insatiable “desire for more.” Audit culture and business ontology enculturate a reliance on quantification to evaluate whether that desire has been fulfilled. These conditions compel Facebook’s users to reimagine both self and friendship in quantitative terms, and situates them within a graphopticon, a self-induced audit of metricated social performance where the many watch the metrics of the many. The theoretical analyses presented are further considered and examined in practice using the author’s artistic software, Facebook Demetricator. In use by thousands worldwide since late 2012, this software removes all metrics from the Facebook interface, inviting the site’s users to try the system without the numbers and to see how that removal changes their experience. Feedback from users of Facebook Demetricator illuminates how metrics activate the “desire for more,” driving users to want more “likes,” more comments, and more friends. Further, the metrics lead users to craft self-imposed rules around the numbers that guide them on how, when, and with whom to interact. Facebook Demetricator, through its removal of the metrics, both reveals and eases these patterns of prescribed sociality, enabling a social media culture less dependent on quantification.
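To make the idea of an interface without its numbers a bit more tangible, here is a toy sketch in Python. It is not Grosser’s code and says nothing about how Facebook Demetricator is actually built; the function name `demetricate`, the regular expression, and the short list of counted nouns are assumptions chosen purely for illustration.

```python
import re

# Hypothetical pattern: a count such as "42", "1,204" or "3.2K" directly
# followed by one of a few counted nouns. The noun list is an assumption.
_METRIC = re.compile(
    r"\b\d[\d.,]*[KkMm]?\s+(?=(?:likes?|comments?|friends?|shares?)\b)"
)


def demetricate(label: str) -> str:
    """Drop the count in front of a counted noun, keep everything else."""
    return _METRIC.sub("", label)


labels = ["42 likes", "1,204 comments", "3.2K friends", "Posted 3 hours ago"]
stripped = [demetricate(label) for label in labels]
```

The noun stays, the number goes: a label still says that there are likes, but no longer how many. Numbers that do not count social feedback, like the hours in the last label, are left alone in this sketch.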

fix at pleasure

In addition to his substantial contributions to science (see Bogolyubov, Mikhailov & Yushkevich 2007) the eminent Swiss mathematician and physicist ↑Leonhard Euler (1707-1783) also wrote texts accessible to the general public. Euler’s didactics are absolutely admirable because you can dive into both ‘Elements of Algebra’ (1770) and ‘Letters of Euler on different subjects in physics and philosophy addressed to a German princess’ (1768-1774) without presuppositions, without any previous knowledge whatsoever. His ‘Elements of Algebra’ begins at virtually nothing and then builds up to unforeseen heights. Here, in full, is the beginning of chapter one of section one of part one, which is simply headed ‘Of mathematics in general:’

1. Whatever is capable of increase or diminution, is called magnitude, or quantity.

A sum of money therefore is a quantity, since we may increase it or diminish it. It is the same with a weight, and other things of this nature.

2. From this definition, it is evident that the different kinds of magnitude must be so various, as to render it difficult to enumerate them and this is the origin of the different branches of Mathematics, each being employed on a particular kind of magnitude. Mathematics, in general, is the science of quantity; or, the science which investigates the means of measuring quantity.

3. Now, we cannot measure or determine any quantity, except by considering some other quantity of the same kind as known, and pointing out their mutual relation. If it were proposed, for example, to determine the quantity of a sum of money, we should take some known piece of money, as a louis, a crown, a ducat, or some other coin, and show how many of these pieces are contained in a given sum. In the same manner, if it were proposed to determine the quantity of a weight, we should take a certain known weight; for example, a pound, an ounce, &c., and then show how many times one of these weights is contained in that which we are endeavouring to ascertain. If we wished to measure any length, or extension, we should make use of some known length, such as a foot.

4. So that the determination, or the measure of magnitude of all kinds, is reduced to this: fix at pleasure upon any one known magnitude of the same species with that which is to be determined, and consider it as the measure or unit; then, determine the proportion of the proposed magnitude to this known measure. This proportion is always expressed by numbers; so that a number is nothing but the proportion of one magnitude to another, arbitrarily assumed as the unit.

5. From this it appears that all magnitudes may be expressed by numbers; and that the foundation of all the Mathematical Sciences must be laid in a complete treatise on the science of numbers, and in an accurate examination of the different possible methods of calculation. (Euler 1822 [1770]: 1-2 | bold emphasis mine)

Now compare this to what ↑Henri Poincaré wrote on ‘science and hypothesis’—for the full passage of the text see ↵poincaré on sts:

[…] the position held by hypothesis was seen; it was recognised that it is as necessary to the experimenter as it is to the mathematician.

[…] we should examine with the utmost care the role of hypothesis; we shall then recognise not only that it is necessary, but that in most cases it is legitimate. We shall also see that there are several kinds of hypotheses; that some are verifiable, and when once confirmed by experiment become truths of great fertility; that others may be useful to us in fixing our ideas; and finally, that others are hypotheses only in appearance, and reduce to definitions or to conventions in disguise. The latter are to be met with especially in mathematics, and in the sciences to which it is applied. From them, indeed, the sciences derive their rigour; such conventions are the result of the unrestricted activity of the mind, which in this domain recognises no obstacle. For here the mind may affirm because it lays down its own laws; but let us clearly understand that while these laws are imposed on our science, which otherwise could not exist, they are not imposed on Nature.

[…] the aim of science is not things themselves, as the dogmatists in their simplicity imagine, but the relations between things; outside those relations there is no reality knowable. (Poincaré 1905 [1902]: xxi-xxiv)

And for the sake of it, here is the German original of the above Euler quote, ‘Von den mathematischen Wissenschaften überhaupt’:

1.

Erstlich wird alles dasjenige eine Größe genennt, welches einer Vermehrung oder einer Verminderung fähig ist, oder wozu sich noch etwas hinzusetzen oder davon wegnehmen läßt.

Diesemnach ist eine Summa Gelds eine Größe, weil sich dazu setzen und hinweg nehmen läßt.

Ingleichen ist auch ein Gewicht eine Größe und dergleichen mehr.

2.

Es giebt also sehr viel verschiedene Arten von Größen, welche sich nicht wohl herzehlen laßen; und daher entstehen die verschiedene Theile der Mathematic, deren eine jegliche mit einer besondern Art von Größen beschäftiget ist, indem die Mathematic überhaupt nichts anders ist als eine Wißenschaft der Größen, und welche Mittel ausfündig macht, wie man dieselben ausmeßen soll.

3.

Es läßt sich aber eine Größe nicht anders bestimmen oder ausmeßen, als daß man eine Größe von eben derselben Art als bekannt annimt, und das Verhältniß anzeiget, worinnen eine jegliche Größe, von eben der Art, gegen derselben steht.

Also wann die Größe einer Summa Gelds bestimmt werden soll, so wird ein gewißes Stück Geld als z. E. ein Gulden, ein Rubel, ein Thaler, oder ein Ducaten und dergleichen für bekannt angenommen, und angezeigt wie viel dergleichen Stücke in gemeldeter Summa Gelds enthalten sind.

Eben so wann die Größe eines Gewichts bestimmt werden soll, so wird ein gewißes Gewicht als z. E. ein Pfund, ein Centner, oder ein Loth und dergleichen für bekannt angenommen, und angezeigt wie viel derselben in dem vorigen Gewicht enthalten sind.

Soll aber eine Länge oder eine Weite ausgemeßen werden, so pfleget man sich darzu einer gewißen bekannten Länge, welche ein Fuß genennet wird, zu bedienen.

4.

Bey Bestimmungen, oder Ausmeßungen der Größen von allen Arten, kommt es also darauf an, daß erstlich eine gewiße bekannte Größe von gleicher Art fest gesetzet werde (welche das Maaß, oder die Einheit, genennet wird) und also von unserer Willkühr lediglich abhängt; hernach daß man bestimme in was für einem Verhältniß die vorgegebene Größe gegen dieses Maaß stehe, welches jederzeit durch Zahlen angezeigt wird, so daß eine Zahl nichts anders ist als das Verhältniß, worinnen eine Größe gegen eine andere, welche für die Einheit angenommen wird, steht.

5.

Hieraus ist klar, daß sich alle Größen, durch Zahlen ausdrücken laßen, und also der Grund aller Mathematischen Wißenschaften darin gesetzt werden muß, daß man die Lehre von den Zahlen, und alle Rechnungs-Arten, so dabey vorkommen können, genau in Erwegung ziehe, und vollständig abhandele.
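Euler’s paragraphs 3 and 4 can be put into a few lines of Python, as a minimal sketch and nothing more. The function name `measure` and the sample magnitudes (a rope, a foot, a yard) are made up for illustration; only the idea that a number is the proportion of a magnitude to a unit fixed at pleasure comes from the text.

```python
from fractions import Fraction


def measure(magnitude: Fraction, unit: Fraction) -> Fraction:
    """Express `magnitude` as a number: its proportion to the chosen `unit`."""
    if unit <= 0:
        raise ValueError("the unit must be a positive magnitude of the same kind")
    return magnitude / unit


# One and the same length. The inch figures below are only bookkeeping so
# that the magnitudes can be written down at all, which is itself a choice of unit.
rope = Fraction(72)
foot = Fraction(12)
yard = Fraction(36)

in_feet = measure(rope, foot)    # proportion of the rope to a foot
in_yards = measure(rope, yard)   # proportion of the same rope to a yard
```

The rope does not change, only the number does, and the two numbers differ by exactly the proportion between the two units. Which unit to fix upon is a convention, not a property of the rope.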

brandnew ancient games

Obviously I am not at all informed, let alone up to date. Granted. But then again, this wasn’t really to be expected. Not that I’d have any time for it whatsoever, but nevertheless last Saturday I suddenly decided that it was high time to finally play ‘Grand Theft Auto V’ (GTA 5 | Rockstar 2013 [sort of 2013]). In a minute I’ll come back to why I so suddenly longed for GTA 5. As I like having my games on physical data storage media, neatly arranged on the shelf, I set out towards my regular shop. The game already hit the store shelves in September 2013, so my hopes were high that I’d get it at a heavy discount. Even triple-A titles like that rapidly drop in price, and currently you can get e.g. ‘Dishonored’ (Arkane Studios 2012), ‘Assassin’s Creed III’ (Ubisoft Montreal 2012a), or ‘Far Cry 3’ (Ubisoft Montreal 2012b) as absolute bargains. From just halfway through the shop I could already identify the distinctive GTA design on the cover of a dozen or so boxes displayed on the corresponding shelf. When I stood right in front of it I gasped and thought, ‘that I do call a price drop!’ as the GTA 5 box said € 5,-. Very much in doubt, and restraining myself from looking if they offered GTA 4 for € 4,-, I picked up the case and read … ‘pre-order box.’ My, my, those marketing and shop-keeping geniuses these days, displaying pre-order boxes for a game that was released more than a year ago. Toodle-oo … wait a minute, it also says ‘27th January,’ but GTA 5 was released in September ’13 … and then it dawned on me. Quickly checking the Wikipedia entry on my phone confirmed my worst fears: Back then it was released for the PlayStation 3 and Xbox 360, and towards the end of January 2015 they will release it for the PC. And there they went, my hopes for getting a triple-A title at a bargain price. In January they will charge us PC gamers the full price for a game 16 months old.
But then again I am still playing ‘Quake Live’ (QL | id Software 2010), which essentially is ‘Quake III Arena’ (Q3A | id Software 1999), by now a 15-year-old game.

The price of the PC version of GTA 5 will inevitably drop, too. So what? Why then does that bother me at all? For two reasons, on slightly different but interconnected levels.

First, the universes of especially big, story-driven games are mythical territories in which the members of the gaming scenes collectively partake (Knorr 2012a, b, c). It’s not only the action and the storyline, but all kinds of artistic content and the atmosphere a game generates which are embraced. This embracing works together with, acts out, and adds to an inner universe: a personal conglomeration of ambiences, sentiments, æsthetics, and narrative content, built from a lifetime of digesting popular culture, and of assimilating its modes of representation into one’s own conceptions of life. Titles like those of the GTA series are at the forefront of this, because they are chock-full of allusions and citations. This looms an ever larger associative universe and weaves its fabric tighter and tighter. The tradition of ↵easter eggs, little or bigger things within the gameworld, ↵intentionally hidden there to be discovered by the players, is one of these qualities. And here’s the problem. Especially in open-world games exploring is decisive: exploring the vast game world and then sharing the explorations and experiences with others online. Well, everything in GTA 5 has already been found and done, by the console jockeys. The vast number of let’s-play, stunt, and easter-egg videos on YouTube is ample testimony to that. Of course I could ignore everything on GTA 5 on YouTube and the Net in general, but that’s not the way titles like that are played. Just as most people do not keep silent about the movies or TV series they’ve seen. Quite the contrary. Talking about movies and learning more about them and their backgrounds from a variety [pun not intended] of sources is an essential dimension of how media are ‘consumed.’ The same is true for computer games, and much of the interaction around them naturally happens online. Since September 2013 PC gamers have been shut out from this interaction with respect to GTA 5.
Well, now you could say: Why haven’t you bought a console? Which leads me to my second point.

Of course I could’ve bought a console, or all of them. I did not, mainly because I do not like them very much; I prefer the PC. But my personal preferences are not important here; if anything matters, then only part of the reasoning behind them. The PC is an open all-purpose system. Beyond that, my main machine was built especially to cope with high-end games. Now, I’ve already got an all-purpose machine which is superior to most other systems when it comes to running games … why should I buy a special-purpose machine which is inferior on top of that [for the moment I do not take into account specialities like the Wii or Kinect technology]? The only reason would be that parts of the industry urge me to do so, e.g. by not publishing certain game titles for PC.

Seven years ago ↵John Romero perfectly expressed what I more or less thought at that time, too:

Next-gen console is big but its future isn’t too bright with the emergence of cheap PC multi-core processors and the big change the PC industry will go through during the next 5 years to accommodate the new multi-core-centric hardware designs. My prediction is that the game console in the vein of the PS3 and XBOX 360 is going to either undergo a massive rethink or go away altogether.

Well, either that massive rethink has taken place or we were both dead wrong. Leaving the rethink aside, what has happened, and what was not taken into consideration at all back then, is the strategy of creating ever more closed systems instead of open ones, and consolidating them by the powers of the market. The blatant example of course is Apple’s closed technological ecosystem of iPhone, iPad, and iTunes, which has begun to swallow their laptop and desktop computers and operating systems, too. Fabulous hardware intentionally crippled. And consoles are closed ecosystems by design. There, interpretative flexibility is smaller and creativity dwindles because practices of appropriation like modding (Knorr 2012c) are made harder. But still there’s the PC. Hell, yes, but if I want to play an A-list title, no matter if I officially downloaded it or bought it on DVD, I have to log on to closed ecosystems like Games for Windows, Uplay, Rockstar Social Club, or what have you. If, and only if, the title is out for PC at all.

Erh … didn’t think that that would develop into such a rant :) There’s way more to it, but I’ll leave it at that for the time being, I think.

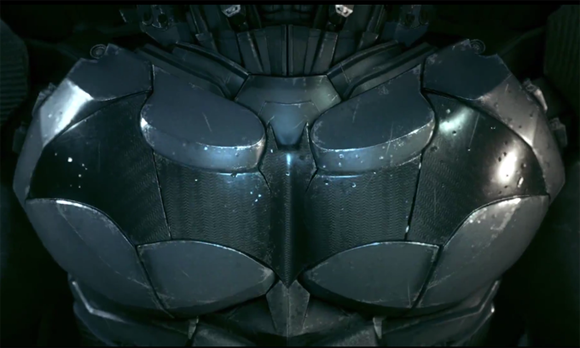

the arkham knight

After the disappointment that ‘Batman: Arkham Origins’ (Warner Bros. Games Montreal 2013) was [and ↑Jaz is with me here, giving good reasons why it was disappointing], Rocksteady Studios is at the helm again and ‘Batman: Arkham Knight’ (Rocksteady 2015) is scheduled for release on 02 June 2015. After their glorious games ‘Batman: Arkham Asylum’ (2009) and ‘Batman: Arkham City’ (2011) I am absolutely convinced that Rocksteady will do it again. Just as The Creative Assembly recently saved the Alien franchise with the fantastic ‘Alien: Isolation’ (2014) after Gearbox Software had nearly ruined it with the godawful ‘Aliens: Colonial Marines’ (2013).

But I digress. What I really want to talk about, again, is the ever growing impact of what I call the ↵cyberpunk discourse, up to the point of it hijacking other genres. For example, the cyberpunk discourse infested the zombie genre [see ↵omega legend for that, and, in more detail, including computer games, and just recently printed, Knorr 2014].

And there’s yet more hijacking.

Have you noticed that today’s two biggest superhero franchises from the two big comic book universes, Marvel and DC, are about superheroes without any superpowers whatsoever? Instead, both Iron Man and Batman heavily rely on technology. With Iron Man it’s obvious. His superhuman abilities are due to his exoskeleton armour. It’s all the suit, as Steve ‘Captain America’ Rogers (Chris Evans) ↑tried to point out to Tony ‘Iron Man’ Stark (Robert Downey Jr.) in ‘The Avengers’ (Whedon 2012):

Steve Rogers: Big man in a suit of armour. Take that off, what are you?

Tony Stark: Genius, billionaire, playboy, philanthropist.

Steve Rogers: I know guys with none of that worth ten of you. I’ve seen the footage. The only thing you really fight for is yourself. You’re not the guy to make the sacrifice play, to lay down on a wire and let the other guy crawl over you.

Tony Stark: I think I would just cut the wire.

Steve Rogers: Always a way out … You know, you may not be a threat, but you better stop pretending to be a hero.

Tony Stark: A hero? Like you? You’re a lab rat, Rogers. Everything special about you came out of a bottle!

Both their superhero personas are products of technology. And there’s yet more to it. To the list of what Tony Stark sees himself to be we could add ‘potential alcoholic’ and ‘troubled personality,’ among other things. In a nutshell, despite his armour’s fine design he’s not a streamlined hero, but a hardboiled character. So is Bruce ‘Batman’ Wayne. His deeply traumatized noir personality is drastically rendered in Christopher Nolan’s dark movie trilogy (2005, 2008, 2012), which truly made the franchise into what it now is [↵hugo strange gives a bit of further background].

But back from the depths of hardboiled film noir character psychology to the equally deep-reaching surfaces of design. Reviewing the still and moving images from ‘Batman: Arkham Knight’ available so far gave me the impression that Batman gets ever more similar to Iron Man. Compare Batman’s new costume, a suit of armour really, to the ↑Iron Man Suit Mark VII designed by Phil Saunders and digitally sculpted by Josh Herman for ‘The Avengers’ movie:

Watch the ‘Arkham Knight’ announce trailer ‘↑Father to Son’ and the gameplay trailer ‘↑Evening the Odds’ to see more of the suit and its exoskeletal robotic qualities in operation. And to see the Batmobile. It really is the vehicle which drove cyberpunkish posthumanist ideas deep down into ‘Arkham Knight.’ Very revealing in this respect is what Dax Ginn, Brand Marketing Producer at Rocksteady Studios, ↑said at E3 2014:

And then bringing in the Batmobile at any time. You know, we wanted to make sure that the Batmobile feels like an extension of Batman’s abilities, that augments Batman’s abilities, but is only ever like a second away, a button-press away. You want to be driving, one button-press and it’s there, and you seamlessly transition into it. You want to get out of it, you just eject out at any time. So that kind of interplay between man and machine was really the heart and soul of the entire design of the game. (from 00:00:58 on | bold emphasis mine)

beyond cyberpunk revisited

Back in August 2006 here at xirdalium I wrote ↵an entry [worthwhile, lots of associations] on Gareth Branwyn’s and Peter Sugarman’s 1991 HyperCard classic ‘↑Beyond cyberpunk’ [← that’s a link to the complete web version]. Now Gareth has published his book ‘Borg like me’ (Branwyn 2014a), containing a treasure trove of his writing from the past three decades, including an essay (Branwyn 2014b) on the making of ‘Beyond cyberpunk’. Boing Boing has ↑said essay online, and here are some excerpts [grist to my mill]:

Peter [Sugarman] and I [Gareth Branwyn] began having regular phone conversations about hypermedia and how it might change the nature of storytelling, media delivery, and information organization and navigation. I’d always been struck by [anthropologist and] cyberneticist ↑Gregory Bateson‘s idea of forever being mindful to balance “rigor and imagination” in one’s pursuit of knowledge. It seemed to Peter and me that hypermedia provided a perfect environment to pursue ideas deeply and rigorously, while engineering in fun, whimsy, and a sense of the unexpected—a kind of orchestrated chaos, a vibrant little media ecology. We began kicking around ideas for a collaborative HyperCard project we could do together. […]

At the time (1990), the Internet was not yet in the media spotlight. So-called cyberculture (where these near-future speculative worlds met the bleeding edge of real-life technoculture) was in its heyday, but known only on the cultural fringes. We could sense that all things “cyber” were about to bust into the mainstream and we wanted to chart the course cyberculture had taken, from its sci-fi and early hacker roots, through the Internet, and soon, we suspected, into everyday, mainstream life.

digital china on cyberanthropology

Well, here it is—to my knowledge the first ↑English-language review of my book ‘Cyberanthropology’ (2011) [which is in German]. ↑Annika Pissin of Lunds Universitet’s project ↑Digital China was so kind as to write a fine review in ↑the project’s blog :)

Here’s the ↵collection of all the reviews I’m aware of.

berners-lee on snowden

It’s been almost eight years since I ↵last quoted ↑Sir Timothy Berners-Lee, the inventor of the Web, here at xirdalium. On 1st August 2006 I republished the following sentence from ↑his blog:

When I invented the Web, I didn’t have to ask anyone’s permission. Now, hundreds of millions of people are using it freely. I am worried that that is going [to] end in the USA.

Well, it’s more than high time again. Here’s what ↑thenextweb wrote recently:

Sir Tim Berners-Lee, the father of the World Wide Web, has come out in support of former NSA contractor Edward Snowden, saying that the PRISM surveillance program leak did the world a favor. As a guest editor on the BBC’s Radio 4 Today program, Berners-Lee called Snowden a “really important part of the system.”

In ↑another article at the BBC I found this:

Mr Snowden [speaking via video link in Austin at the South by Southwest Interactive conference in early March 2014] received a warm reception from the audience, and the question-and-answer session included words of praise in an email from internet pioneer Sir Tim Berners-Lee, who said his actions were “profoundly in the public interest”.

And here’s the whole Tim Berners-Lee on guest editing Today at BBCR4Today, from 26 December 2013:

snowden on games

Here is a passage from chapter two of ↑Glenn Greenwald’s excellent newest book “No Place to Hide: Edward Snowden, the NSA, and the U.S. Surveillance State” (2014), which I’ve recently read:

Finally, Snowden gave me an answer that felt vibrant and real. “The true measurement of a person’s worth isn’t what they say they believe in, but what they do in defense of those beliefs,” he said. “If you’re not acting on your beliefs, then they probably aren’t real.”

How had he developed this measure for assessing his worth? Where did he derive this belief that he could only be acting morally if he was willing to sacrifice his own interests for the sake of the greater good?

“From a lot of different places, a lot of experiences,” Snowden said. He had grown up reading large amounts of Greek mythology and was influenced by Joseph Campbell’s The Hero with a Thousand Faces, which, he noted, “finds common threads among the stories we all share.” The primary lesson he took away from the book was that “it is we who infuse life with meaning through our actions and the stories we create with them.” People are only that which their actions define them as being. “I don’t want to be a person who remains afraid to act in defense of my principles.”

This theme, this moral construct for evaluating one’s identity and worth, was one he repeatedly encountered on his intellectual path, including, he explained with a hint of embarrassment, from video games. The lesson Snowden had learned from immersion in video games, he said, was that just one person, even the most powerless, can confront great injustice. “The protagonist is often an ordinary person, who finds himself faced with grave injustices from powerful forces and has the choice to flee in fear or to fight for his beliefs. And history also shows that seemingly ordinary people who are sufficiently resolute about justice can triumph over the most formidable adversaries.”

He wasn’t the first person I’d heard claiming video games had been instrumental in shaping their worldview. Years earlier, I might have scoffed, but I’d come to accept that, for Snowden’s generation, they played no less serious a role in molding political consciousness, moral reasoning, and an understanding of one’s place in the world than literature, television, and film. They, too, often present complex moral dilemmas and provoke contemplation, especially for people beginning to question what they’ve been taught. (Greenwald 2014: chpt. 2)

graeber on play

Why do animals play? Well, why shouldn’t they? The real question is: Why does the existence of action carried out for the sheer pleasure of acting, the exertion of powers for the sheer pleasure of exerting them, strike us as mysterious? What does it tell us about ourselves that we instinctively assume that it is? (Graeber 2014)